Insygna published a framework identifying the critical gaps that emerge when existing enterprise AI governance approaches are applied to agentic systems, and outlining the infrastructure required to close them.

Enterprise AI governance has a blind spot. Most of the frameworks, tools, and regulatory guidance published over the past two years were written with a specific type of AI in mind: a model that receives a prompt, generates a response, and stops. Agentic AI systems, those that plan, execute multi-step tasks, call external tools, and operate with minimal human supervision, were largely an afterthought.

Insygna's framework maps where existing governance approaches break down for agentic systems and what infrastructure is needed to close the gaps. It is available now to early-access closed beta partners and will soon become generally available.

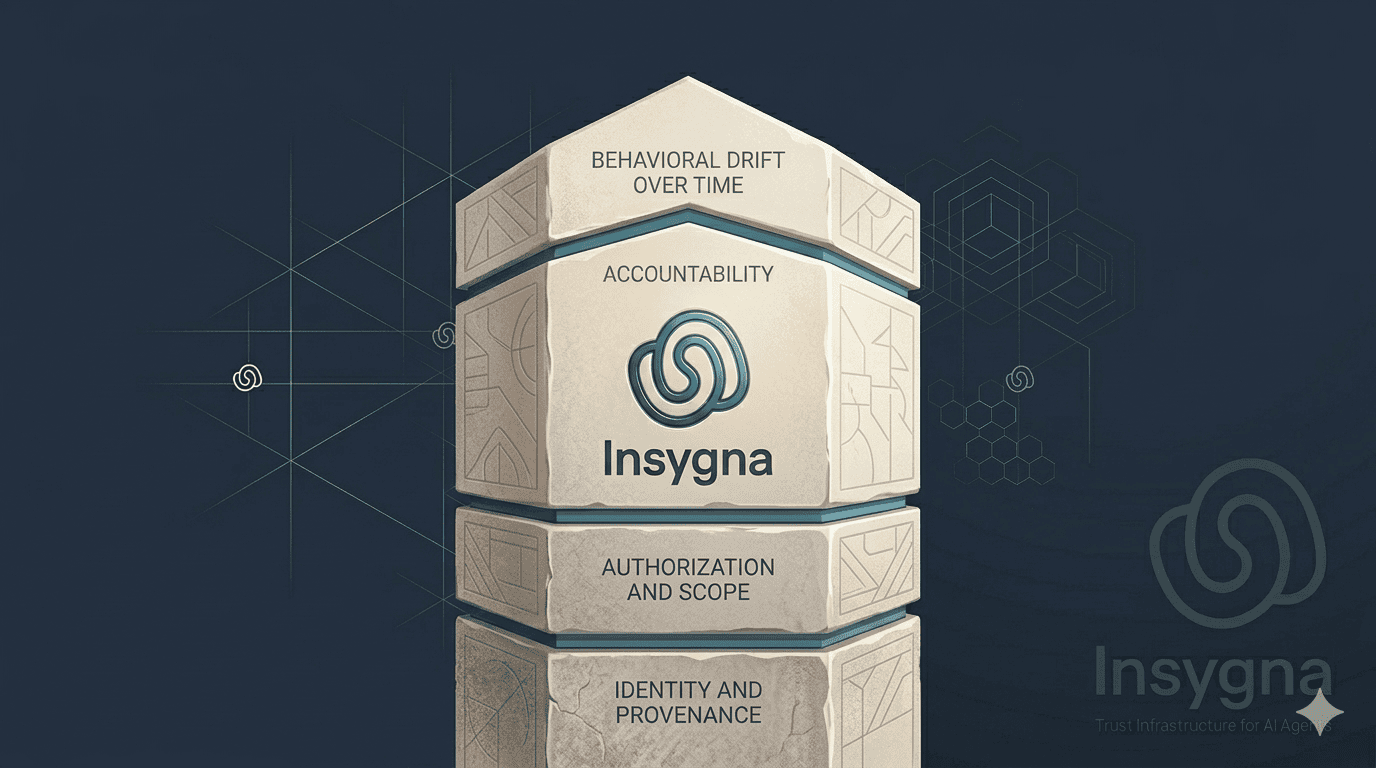

The framework identifies four areas where current governance practice falls short.

Identity and provenance. Existing AI governance frameworks focus primarily on model evaluation and output monitoring. They assume the system being governed is a fixed artifact with a known configuration. Agentic systems do not behave that way. An agent can be instantiated from a base model, modified with a custom system prompt, equipped with tool access, and deployed without any of those changes being visible to the enterprise procurement or compliance team that approved the original integration. Without a persistent, verifiable identity tied to a specific agent configuration at a specific point in time, governance reviews conducted before deployment provide limited protection against what actually runs in production.
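As a rough illustration of what an identity "tied to a specific agent configuration" could look like, the Python sketch below hashes an agent's base model, system prompt, and tool list into a single fingerprint. The field names and the `agent_fingerprint` helper are hypothetical and are not drawn from the Insygna framework; it is a minimal sketch that assumes the configuration can be serialized to JSON.

```python
import hashlib
import json


def agent_fingerprint(config: dict) -> str:
    """Return a SHA-256 hex digest of a canonical JSON encoding of config.

    Sorting keys makes the digest independent of dictionary key order,
    so the same configuration always yields the same fingerprint.
    """
    canonical = json.dumps(config, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


# Hypothetical configuration that a compliance team reviewed and approved.
approved = {
    "base_model": "example-model-v1",
    "system_prompt": "You are a billing assistant.",
    "tools": ["lookup_invoice"],
}

# The same agent after someone quietly adds a tool before deployment.
deployed = dict(approved, tools=["lookup_invoice", "issue_refund"])

approved_id = agent_fingerprint(approved)
deployed_id = agent_fingerprint(deployed)

print(approved_id == deployed_id)  # False
```

Any change to the model, prompt, or tool list produces a different fingerprint, so comparing the value recorded at approval with the value computed in production would expose an unreviewed change. Recording when each fingerprint was registered, and by whom, is left out of this sketch.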

Authorization and scope. Human workforce governance relies on role-based access control, manager approval chains, and documented scope of work. When an employee exceeds their authorized scope, there is typically an observable action (a transaction, a communication, or a file access) that can be audited after the fact. Agentic systems operating through API calls and tool invocations can exceed their intended scope at machine speed, across multiple systems simultaneously, in ways that neither trigger standard access logs nor produce human-readable audit trails unless purpose-built instrumentation is in place.
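One way to picture purpose-built instrumentation is a wrapper that checks every tool invocation against an allowlist and records the attempt before anything runs. The Python sketch below is illustrative only: `ScopedToolbox`, `ScopeError`, and the tool names are hypothetical, and it assumes all tool calls are routed through a single chokepoint.

```python
from datetime import datetime, timezone


class ScopeError(PermissionError):
    """Raised when an agent requests a tool outside its authorized scope."""


class ScopedToolbox:
    """Hypothetical wrapper that checks and logs every tool call."""

    def __init__(self, agent_id, tools, allowed):
        self.agent_id = agent_id
        self.tools = tools                 # tool name -> callable
        self.allowed = frozenset(allowed)  # tool names this agent may invoke
        self.audit_log = []                # one record per attempted call

    def call(self, name, **kwargs):
        permitted = name in self.allowed and name in self.tools
        # Record the attempt before executing, so denied calls are visible too.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent_id": self.agent_id,
            "tool": name,
            "arguments": kwargs,
            "permitted": permitted,
        })
        if not permitted:
            raise ScopeError(f"{self.agent_id} is not authorized to call {name}")
        return self.tools[name](**kwargs)


toolbox = ScopedToolbox(
    agent_id="billing-agent-01",
    tools={
        "lookup_invoice": lambda invoice_id: {"invoice_id": invoice_id, "status": "paid"},
        "issue_refund": lambda invoice_id, amount: f"refunded {amount}",
    },
    allowed={"lookup_invoice"},
)

toolbox.call("lookup_invoice", invoice_id="INV-1")
try:
    toolbox.call("issue_refund", invoice_id="INV-1", amount=50)
except ScopeError as err:
    print(err)

print([entry["permitted"] for entry in toolbox.audit_log])  # [True, False]
```

Because the log entry is written before the permission check raises, out-of-scope attempts leave the same human-readable trail as permitted calls.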

Accountability when things go wrong. Enterprise risk management assumes that when harm occurs, there is a traceable chain of responsibility. For human workers, that chain runs through employment relationships, professional licenses, and legal frameworks. For AI agents, it currently runs through a tangle of vendor contracts, terms of service agreements, and foundation model provider policies that were not designed to assign liability for specific autonomous actions. Regulations including the Colorado AI Act and the EU AI Act are beginning to address this, but the compliance infrastructure required to satisfy those obligations does not yet exist in any standardized form.

Performance and behavioral drift over time. A credential or compliance review conducted at the point of procurement tells an enterprise what an agent was doing when it was evaluated. It does not tell the enterprise what the agent is doing six months later, after the underlying model has been updated, the system prompt has been revised, or the tool integrations have changed. Human workforce governance addresses this through ongoing performance management and periodic re-credentialing. No equivalent infrastructure exists for AI agents at scale.

The Insygna framework proposes that enterprises treat these four gaps not as security problems to be patched but as structural requirements for a new category of workforce infrastructure: persistent agent identity, real-time authorization monitoring, standardized accountability chains, and continuous performance verification against registered baselines.

"The governance frameworks most enterprises are using today were written for a world where AI was a feature inside a product," said Michael Beygelman, co-founder and CEO of Insygna. "Agentic AI is not a feature. It is a workforce. And workforces require infrastructure that governance checklists cannot replace."